Welcome

As shown above, text rendering is now implemented well enough to display the game’s introduction. It’s kind of neat, because every time I have tested the game since implementing the logic system, it has been trying to display this introduction sequence. It was just calling a function that couldn’t render text until now. Similarly, after these text sequences, it loads the first level of the story and sets up the basics of the room, but it can’t display anything until I implement tile drawing (and some ‘universe’ properties for getting relative positions and whatnot).

There are still a few things left to do for text rendering, but it can already handle ‘formatted’ text, meaning the string indicates at its start that it should use automatic page breaks and spacing. For example:

For this kind of text sequence, the game checks how much space a word needs before drawing it, to see whether it will fit on the current line. If not, it starts a new line, and if there is no room for that either, it clears the screen and starts again at the top. Very neat stuff.
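To make the wrapping rule concrete, here is a minimal sketch of it in C++. It is not the engine’s code: `Layout`, `placeWord` and every limit below (line height, right and bottom edges) are hypothetical values chosen for illustration. Only the 8/4 starting margins and the 8 pixel space come from this post.

```cpp
#include <cassert>

// Hypothetical pen state for a ‘formatted’ text sequence.
struct Layout {
    int penX;
    int penY;
    int page; // incremented every time the screen is "cleared"
};

// Hypothetical limits, in pen coordinates; the real engine's values differ.
constexpr int kLeftMargin = 8;  // PenX after a line or page break
constexpr int kTopMargin = 4;   // PenY after the screen is cleared
constexpr int kLineHeight = 16; // assumed height of one text line
constexpr int kMaxPenX = 128;   // assumed right edge of the text area
constexpr int kMaxPenY = 64;    // assumed bottom edge of the text area
constexpr int kSpaceWidth = 8;  // a space moves the pen 8 pixels

// Places a word of the given pixel width and returns the PenX it starts at.
// If the word does not fit on the current line, move to a new line; if that
// new line does not fit on screen either, "clear" and start at the top.
int placeWord(Layout &l, int wordWidth) {
    assert(wordWidth >= 0);
    if (l.penX + wordWidth > kMaxPenX) {
        l.penX = kLeftMargin;
        l.penY += kLineHeight;
        if (l.penY + kLineHeight > kMaxPenY) {
            l.penY = kTopMargin;
            ++l.page;
        }
    }
    int start = l.penX;
    l.penX += wordWidth + kSpaceWidth; // the word, then the space after it
    return start;
}
```

With these made-up limits, two 40 pixel words fit on a line and the third wraps; after four lines the next wrap clears the screen and bumps `page`.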

The process of making text render is surprisingly complicated, but not for the reason you might think. The text parsing itself is fairly straightforward; it is drawing the individual letters that gets complex. To understand why, I first need to explain how text drawing works in general.

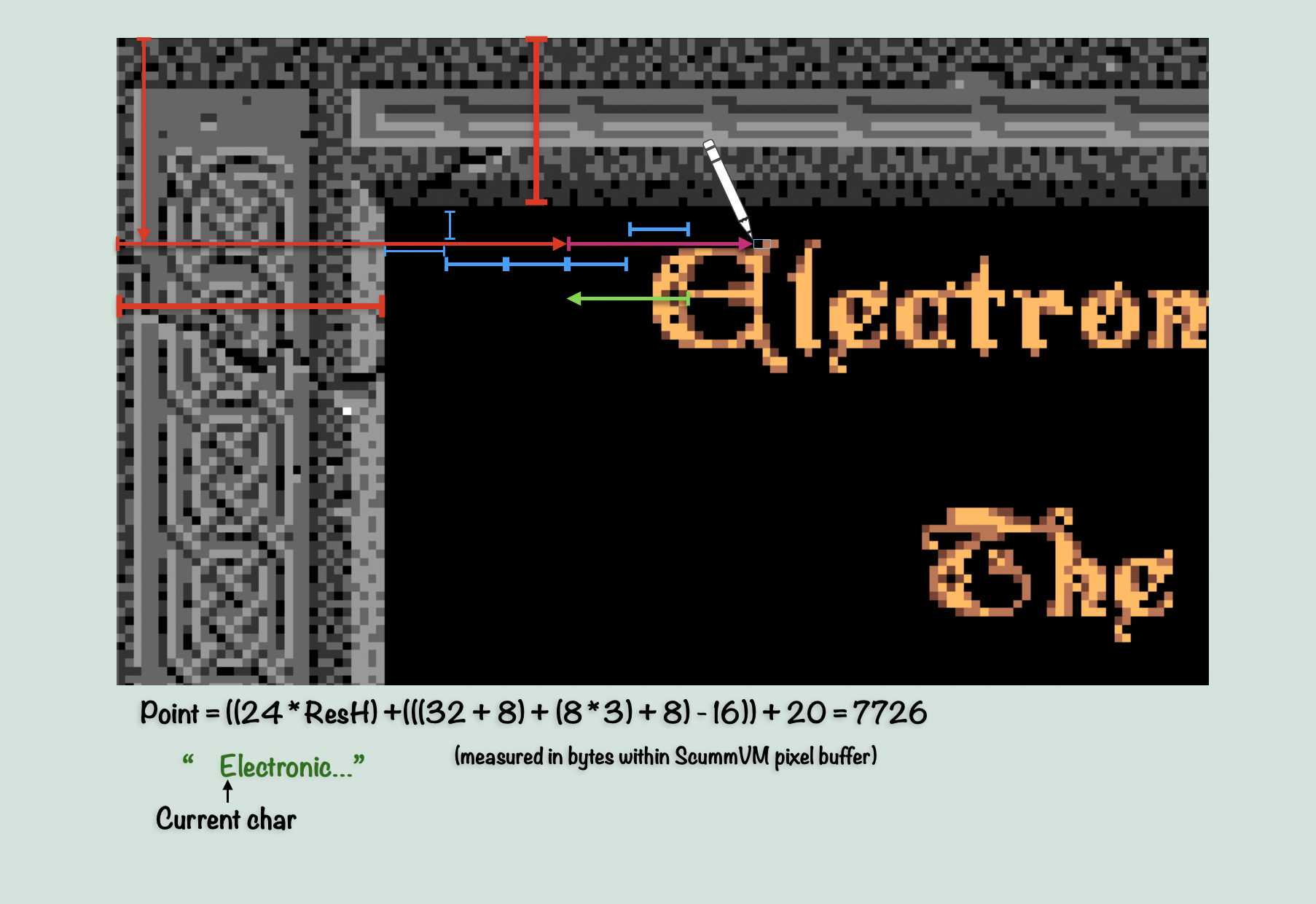

The game ‘writes’ any given text out to the screen. Whether it is the intro text, which fades in to a fully rendered text screen, or a dialog sequence that is drawn across the screen at a certain speed, the same system is used in every case. To draw a letter, whether it is part of a string or just a character on its own (for example, the password ‘certificate’ or the health bar), the game uses a virtual ‘Pen’. By that I mean the source specifically calls the coordinates PenX and PenY. I think this refers to two things: first, that the coordinates are relative, and second, that each letter is drawn individually, in a natural way. The first is pretty simple: ‘Pen’ coordinates are relative to the edges of the viewport, so the screen edges are automatically added to any coordinates you give the character drawing function. The second refers both to the fact that each letter drawn gets a potential delay (which can be influenced by which buttons are or are not held down), and to the fact that letters are drawn relative to their own size and the size of the other letters. For example, the letter ‘i’ is much thinner than ‘m’, yet it fits snugly between other letters as if they were all a uniform size. The thing is, when you put together all of the sprite offsets and pen movements, there is a ton going on just to draw a single pixel of a letter. The process looks something like this:
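As a rough illustration of the first point, a Pen position only becomes a screen position once the viewport edges are added back on. In this sketch `Pen`, `ScreenPos`, `penToScreen` and the viewport origin values are all hypothetical; the post does not give the real edge values.

```cpp
#include <cassert>

// Hypothetical viewport origin on the full screen; the real values come
// from the engine's screen setup and are not given in this post.
constexpr int kViewportX = 32;
constexpr int kViewportY = 20;

// Pen coordinates, relative to the top-left corner of the viewport.
struct Pen {
    int x;
    int y;
};

// Absolute screen coordinates, as handed to the drawing routine.
struct ScreenPos {
    int x;
    int y;
};

// The screen edges are added on when the Pen is turned into coordinates
// for the drawing function, so callers only ever think in Pen space.
constexpr ScreenPos penToScreen(const Pen &pen) {
    return {pen.x + kViewportX, pen.y + kViewportY};
}
```

The design point is simply that text code never needs to know where the viewport sits on screen; only the final conversion does.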

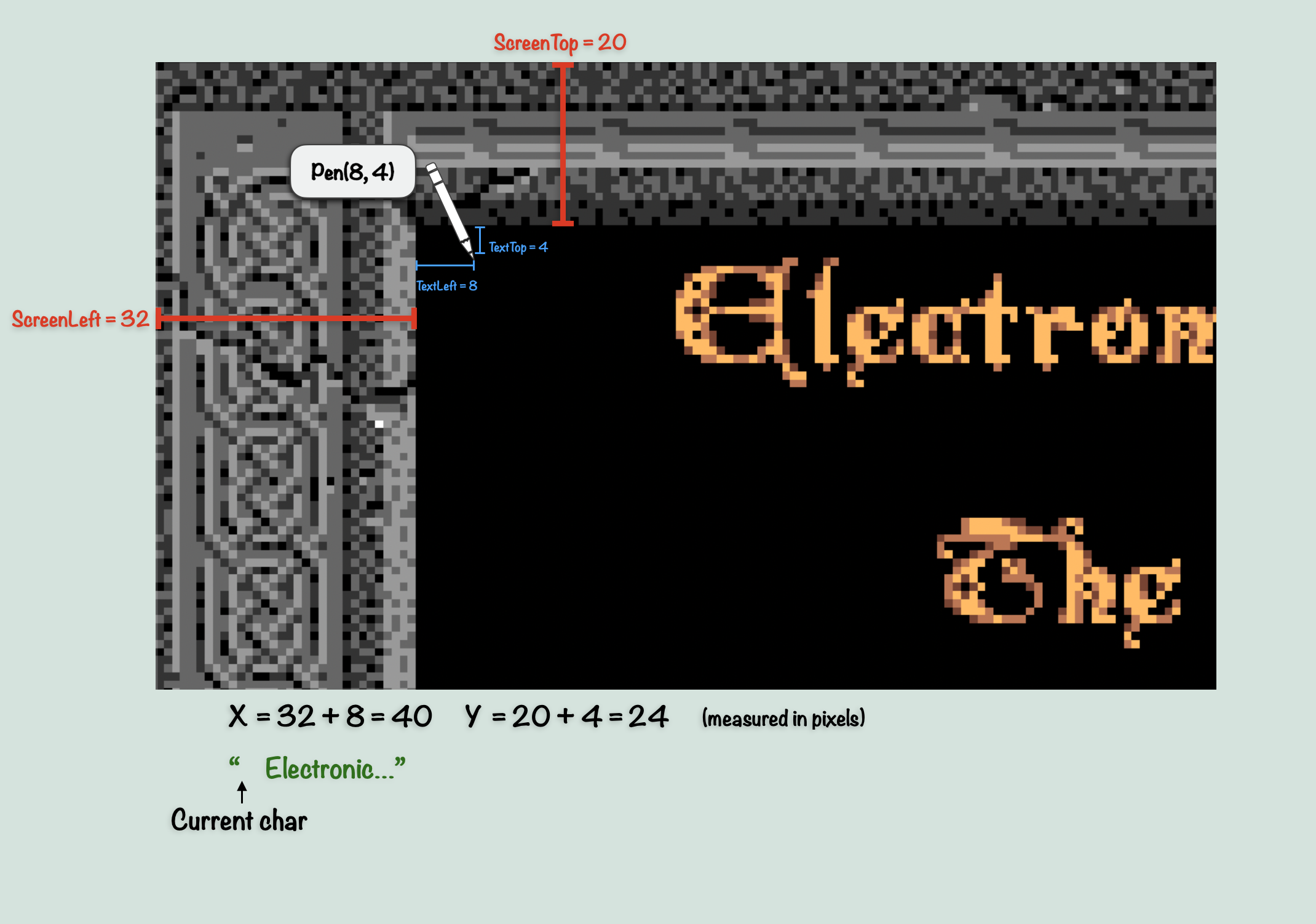

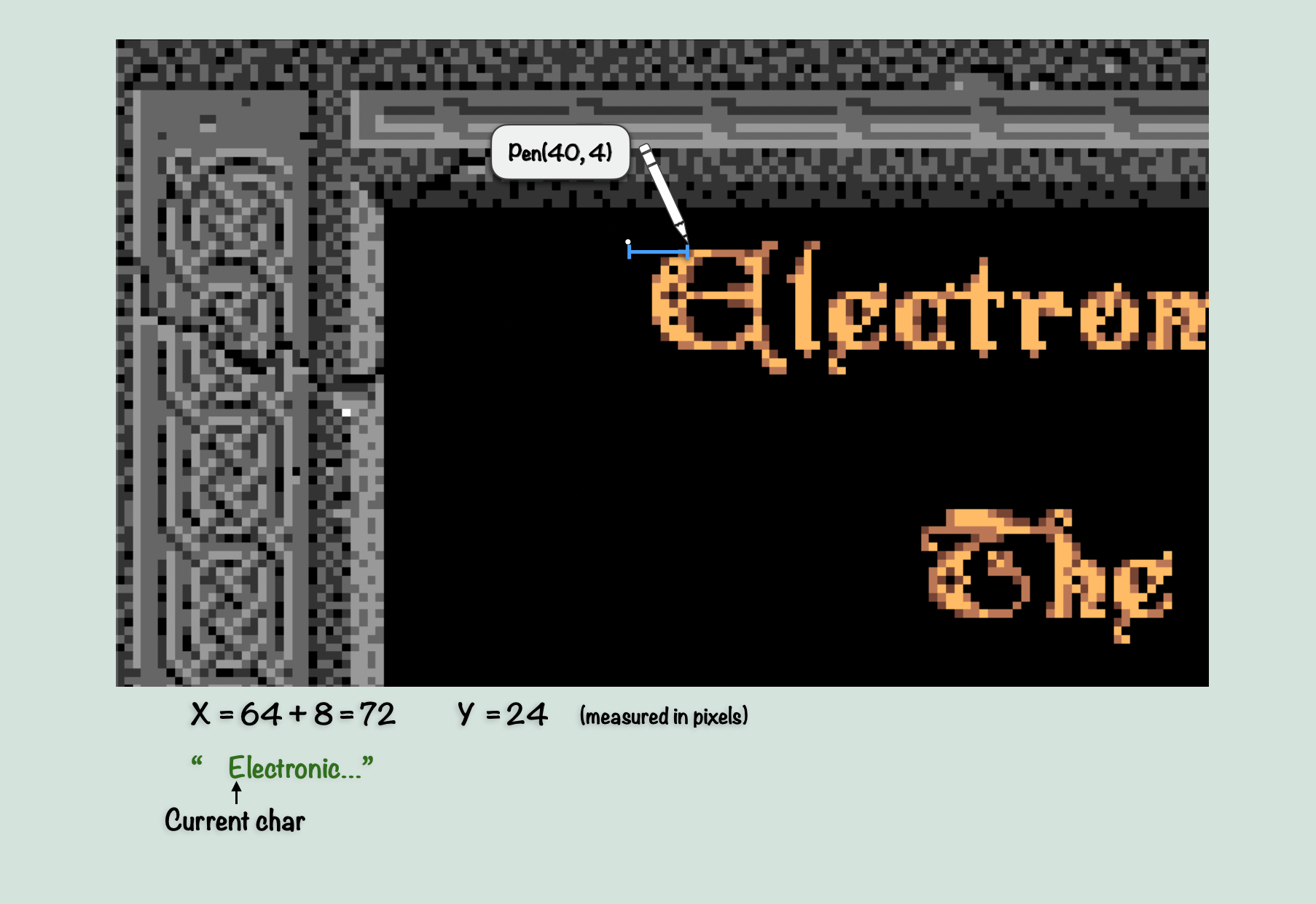

Starting with a Pen of (0,0), a text sequence begins by clearing the screen (drawing a black rectangle over the viewport) and then setting PenX to 8 and PenY to 4. This just gives the text a bit of space. Keep in mind that the screen edges are added on when the Pen is converted into coordinates for the sprite drawing function.
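Those two setup steps could look something like the following sketch. `TextState`, `beginTextSequence`, the viewport size and the palette index used for black are hypothetical; the one-byte-per-pixel buffer matches the ScummVM side described later in this post.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical viewport size, stored one byte per pixel.
constexpr int kViewportW = 256;
constexpr int kViewportH = 128;
constexpr std::uint8_t kBlack = 0; // assumed palette index for black

struct TextState {
    std::vector<std::uint8_t> viewport =
        std::vector<std::uint8_t>(kViewportW * kViewportH, 0xFF);
    int penX = 0;
    int penY = 0;
};

// Clear the viewport to black, then indent the pen to (8, 4) so the
// text does not touch the edges of the viewport.
void beginTextSequence(TextState &t) {
    std::fill(t.viewport.begin(), t.viewport.end(), kBlack);
    t.penX = 8;
    t.penY = 4;
}
```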

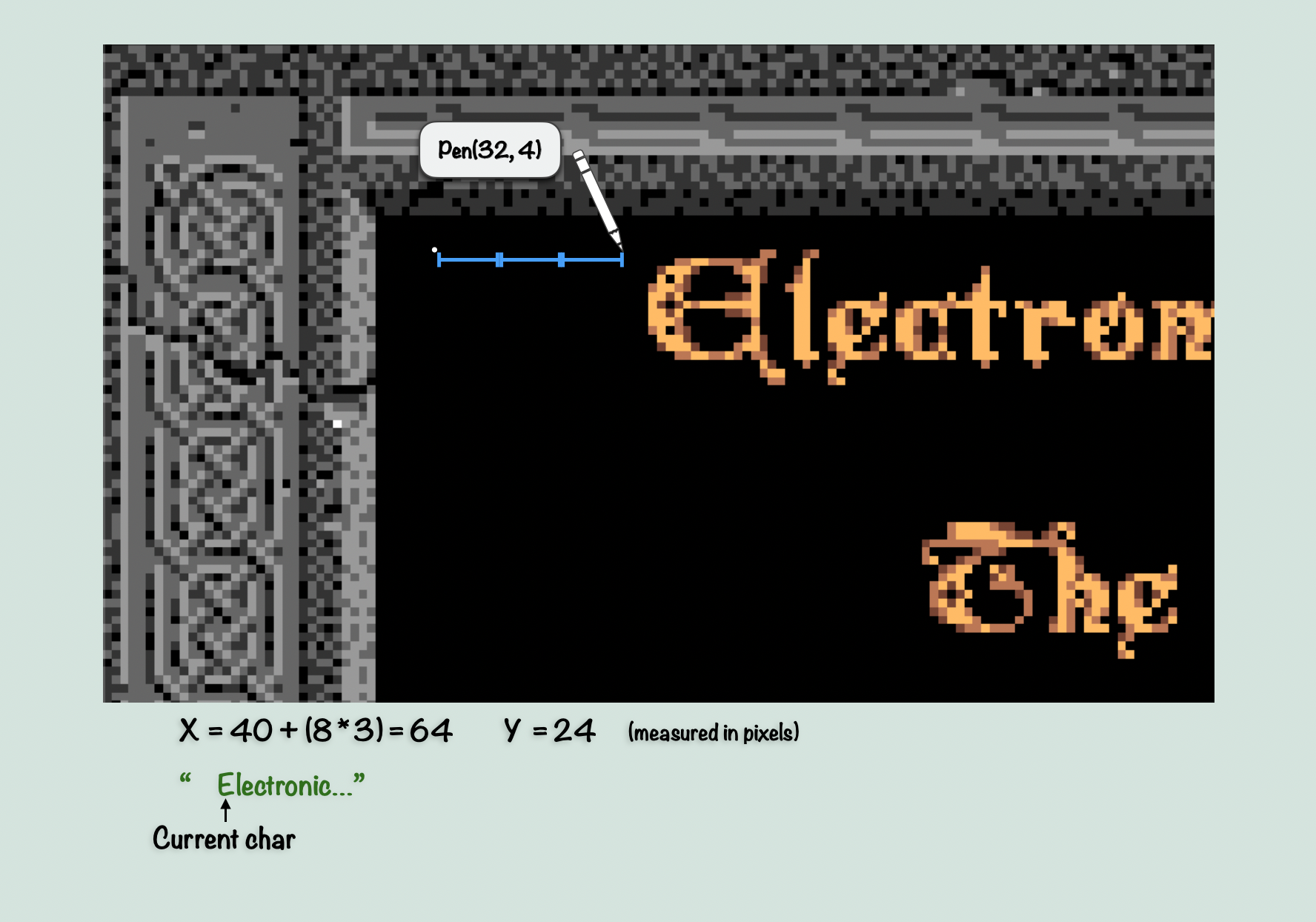

After this, we start on the string itself. The first 4 characters of the intro string are spaces, each of which simply adds 8 pixels to PenX.

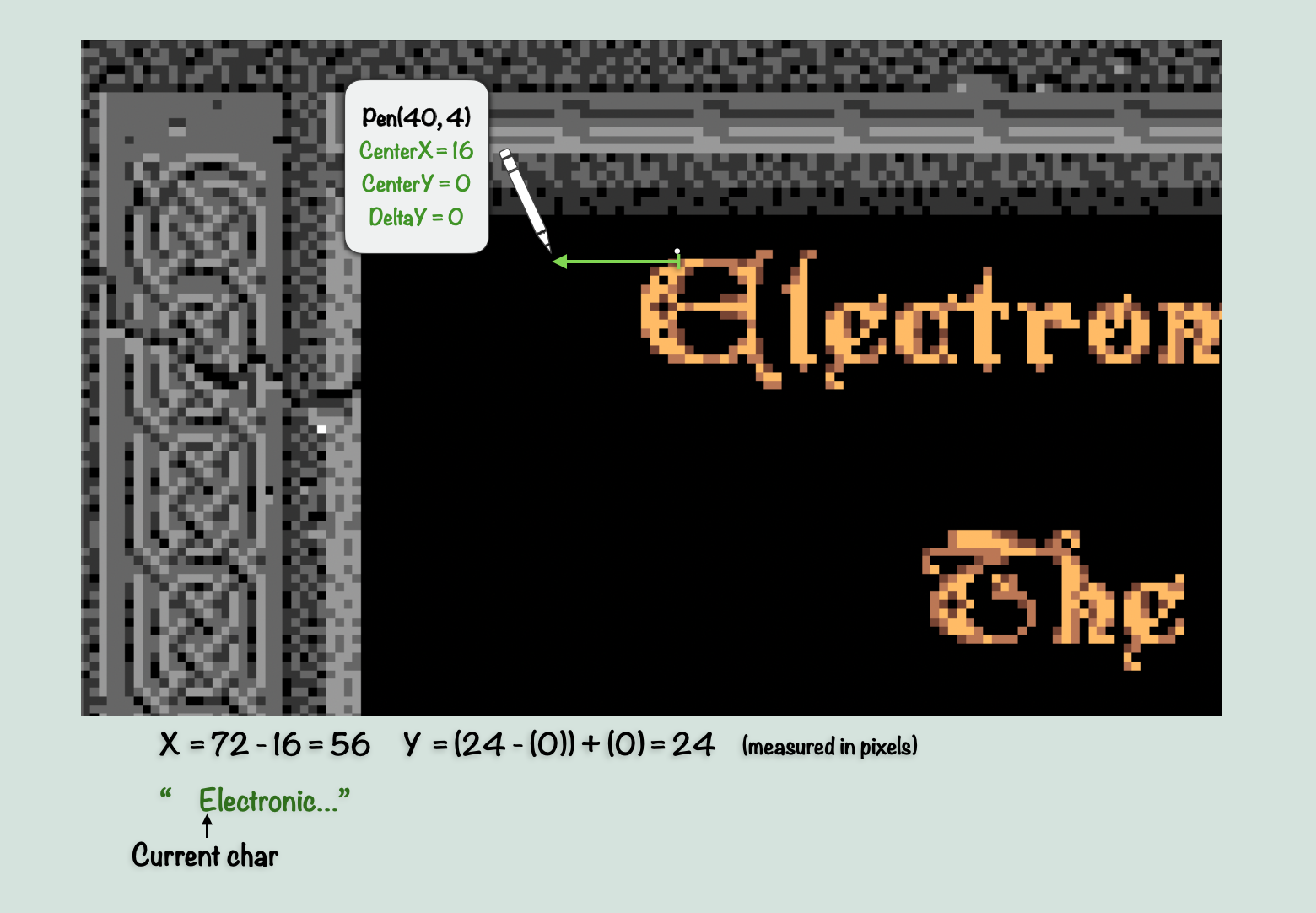

Next is a capital E, and all capitals get an extra 8 pixels added to X. This is because every letter adds 8 pixels to PenX after it has been drawn, to cover the basic width of a letter, and capitals need more room than that. Wide letters like m and w add a further 8, and this applies again if the letter is a capital, as capitals are twice as large in this font. As I mentioned before, however, some letters are smaller and actually subtract a number of pixels from the Pen. For example, ‘t’ subtracts 3 pixels so that it is drawn closer to the previous letter. All of this is taken into account in the sprite sizes, as we’ll see in a moment.
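The spacing rules above can be summarised as a small table-driven function. This is a sketch, not the source’s logic: `PenStep` and `penStepFor` are hypothetical names, and the split between adjustments made *before* drawing (the capital bonus and the ‘t’ tuck-in) and *after* drawing (the basic width and the wide-letter bonus) is my assumption.

```cpp
#include <cassert>
#include <cctype>

// Hypothetical split of one character's pen movement into the part
// applied before the sprite is drawn and the part applied after it.
struct PenStep {
    int before;
    int after;
};

// Spacing rules from the post; which ones happen before vs. after the
// draw is an assumption made for illustration.
PenStep penStepFor(char c) {
    if (c == ' ')
        return {0, 8}; // spaces only move the pen
    unsigned char uc = static_cast<unsigned char>(c);
    bool capital = std::isupper(uc) != 0;
    char lower = static_cast<char>(std::tolower(uc));
    PenStep step{0, 8}; // every letter advances by the basic width of 8
    if (capital)
        step.before += 8; // capitals are twice as large
    if (lower == 'm' || lower == 'w')
        step.after += capital ? 16 : 8; // wide letters, doubled for capitals
    if (c == 't')
        step.before -= 3; // narrow letter: tuck it toward the previous one
    return step;
}
```

Under these assumptions, starting from PenX 8, the four leading spaces move the pen to 40, and the capital E’s extra 8 puts it at 48 when the E is drawn.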

Now we are about to draw the sprite, but the Pen is sitting in the middle of where we know the letter gets drawn. Why is that? Well, along with the Pen and screen parameters, sprites have their own individual offsets and spacing. Letter sprites have a centre X of 16 pixels, which means that before drawing, the sprite function subtracts 16 pixels from whatever X position it is given.
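In code, the centre offset amounts to a single subtraction before drawing. A minimal sketch follows; `Point` and `spriteOrigin` are hypothetical, the 16 pixel centre X comes from the post, and the centre Y of 0 is an assumption since the post only mentions X.

```cpp
#include <cassert>

// Centre offset for letter sprites. Only the X value is given in the post;
// a Y centre of 0 is assumed here.
constexpr int kLetterCentreX = 16;
constexpr int kLetterCentreY = 0; // assumption

struct Point {
    int x;
    int y;
};

// The sprite routine treats the position it is given as the sprite's
// centre, so it backs up by the centre offset before drawing.
constexpr Point spriteOrigin(Point pos, int centreX, int centreY) {
    return {pos.x - centreX, pos.y - centreY};
}
```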

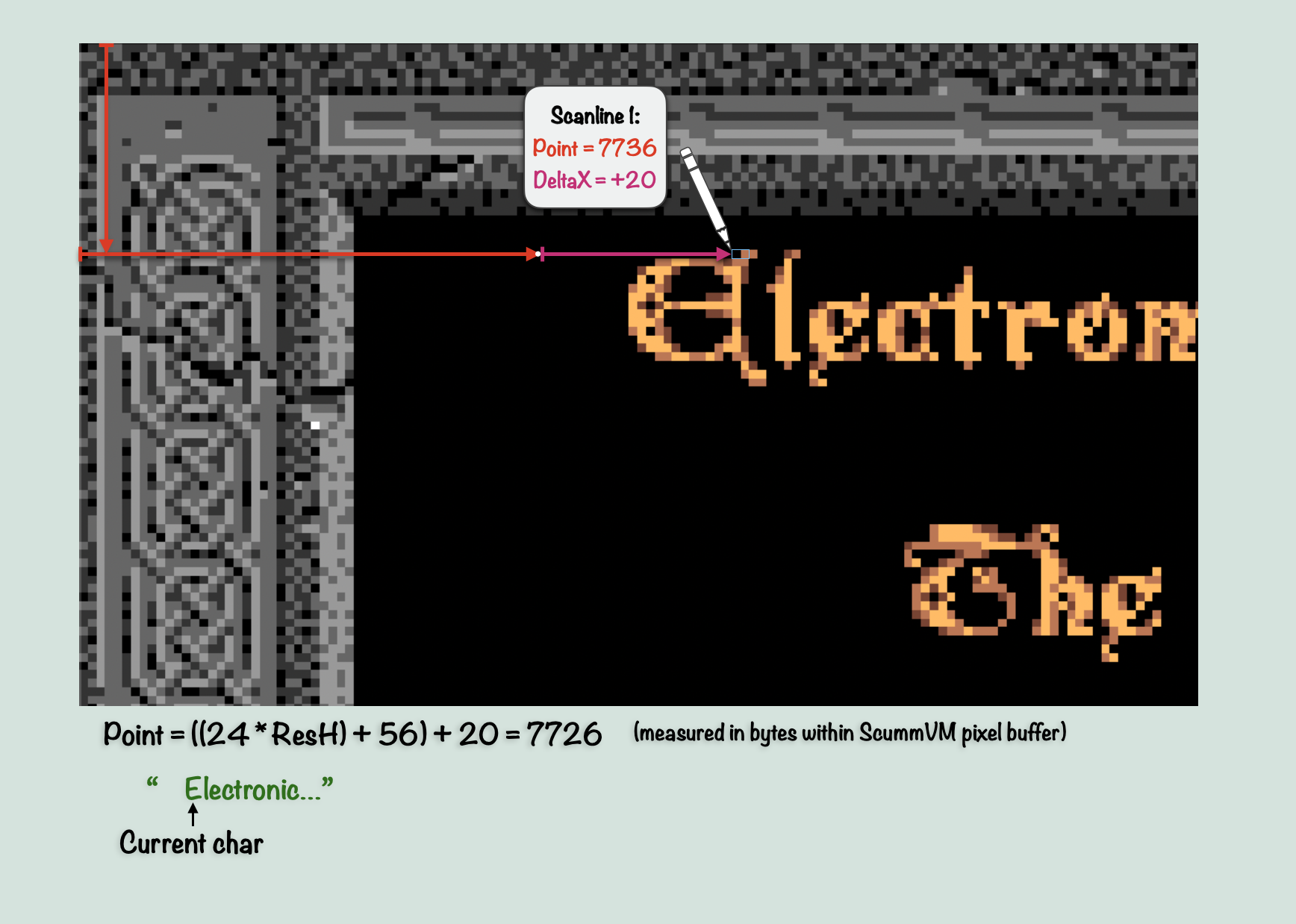

This leaves us pretty far from where we know the sprite is drawn. Before we solve that, the next step is turning the X and Y coordinates into a pixel index. The original game used a mix of byte and pixel positions and had to convert between them, because the virtual screen buffer that sprites draw into contains two pixels per byte (for more on that, see the post just before this one). In ScummVM, the equivalent screen buffer stores one pixel per byte. After this, we can start looking at the sprite data itself, which begins with a scan line offset of 20 pixels. This offset puts our index exactly where it needs to be to draw the first two pixels. Following that, each scan line specifies the difference in position needed to reach the first pixel of the next line.
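Here is a sketch of the index conversion and the scan line walk. `Screen`, `ScanLine`, `pixelIndex`, `packedByteIndex` and `drawSprite` are hypothetical. I also assume each scan line’s offset is measured from the first pixel of the previous line (or from the starting index, for the first line). The post does not pin this down, and the real data is not stored as decoded structs like these.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical decoded scan line: the offset from the previous line's first
// pixel (or from the starting index, for the first line) and its pixels.
struct ScanLine {
    int offset;
    std::vector<std::uint8_t> pixels;
};

// One byte per pixel, like the ScummVM screen buffer.
struct Screen {
    int pitch; // pixels per row
    std::vector<std::uint8_t> pixels;
};

// Pixel index in a one-pixel-per-byte buffer.
int pixelIndex(const Screen &s, int x, int y) {
    return y * s.pitch + x;
}

// Byte index in a buffer that packs two pixels per byte, as in the original.
int packedByteIndex(int pitchInPixels, int x, int y) {
    return y * (pitchInPixels / 2) + x / 2;
}

// Walks the scan lines, jumping by each line's offset before copying pixels.
void drawSprite(Screen &s, int x, int y, const std::vector<ScanLine> &lines) {
    int index = pixelIndex(s, x, y);
    for (const ScanLine &line : lines) {
        index += line.offset; // jump to the first pixel of this line
        for (int i = 0; i < static_cast<int>(line.pixels.size()); ++i) {
            int dst = index + i;
            assert(dst >= 0 && dst < static_cast<int>(s.pixels.size()));
            s.pixels[dst] = line.pixels[i];
        }
    }
}
```

For example, on a 16 pixel wide buffer, drawing at (2, 1) starts at index 18, and a first offset of 20 lands the first two pixels at indices 38 and 39.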

Putting this all together, we can see that there are many steps involved in translating a Pen position into the actual sprite being drawn.

But it ensures that text renders like that, and not like this:

Next

This is now the final week (19 – 26), so this week I will be working on the next piece of code (which I’ll mention in a moment), as well as cleaning up the code a bit, uploading the commits, and writing the final GSoC blog post for the submission. The code I will try to get working this week is map tile drawing, which does not use the sprite function at all (contrary to what I thought before, since sprite properties of some kind are referenced there). This first requires loading the ‘maze’ data and then implementing the tile drawing routines. These are quite complex, so I may ultimately need to write a function for now that interprets the data on its own, and fully implement the source process in the future. Either way, I will do what I can in the time remaining. Thanks for reading!